The evolution of vehicular automation and space robotics represents one of the most fascinating and complex technological convergences in contemporary engineering. Although terrestrial autonomous vehicles (AVs) and planetary rovers operate in radically different environments, their algorithmic foundations, perception paradigms, and decision-making architectures overlap deeply. On Earth, an autonomous car must process massive amounts of sensor data in real time while traveling at high speed. These vehicles navigate structured or semi-structured environments characterized by highly stochastic traffic, dynamic obstacles, and stringent passenger safety requirements.

In deep space, rover autonomy is shaped by radically different constraints. The primary challenge is the absence of real-time communication: the one-way signal delay between Earth and Mars can exceed 20 minutes. Furthermore, rovers operate in completely unstructured environments and face extreme limits on computational capacity, power, and thermal budget, along with damaging exposure to cosmic radiation.
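The 20-minute figure follows from dividing the Earth-Mars distance by the speed of light. The sketch below works that arithmetic through; the two distances are rounded, illustrative values for closest approach and for the far side of the orbit, not mission data.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458  # exact by definition of the metre

def one_way_delay_minutes(distance_km: float) -> float:
    """Signal travel time over the given distance, in minutes."""
    return distance_km / SPEED_OF_LIGHT_KM_S / 60.0

# Rounded Earth-Mars distances (assumed for illustration only).
NEAR_KM = 55e6    # roughly closest approach
FAR_KM = 400e6    # roughly maximum separation

for label, km in (("near", NEAR_KM), ("far", FAR_KM)):
    one_way = one_way_delay_minutes(km)
    print(f"{label}: one-way {one_way:.1f} min, round trip {2 * one_way:.1f} min")
```

Even at closest approach, a command and its confirmation take several minutes to make the round trip, which rules out joystick-style driving.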

Despite decades of optimistic forecasts predicting the imminent mass adoption of fully autonomous vehicles (SAE Level 5), engineering reality has proven that the transition to pure autonomy is a slow and difficult path. Similarly, robotic space exploration has experienced a gradual transition from direct teleoperation to the execution of programmed sequences, finally arriving at systems capable of “thinking while driving.”

This analysis examines the hardware and software architectures governing these two worlds. It explores what the automotive industry is transferring to space exploration, such as commercial processors, deep neural networks, reinforcement learning, and photorealistic simulators. Conversely, it examines what aerospace robotics is teaching terrestrial engineering, particularly regarding fault tolerance, systemic redundancy, navigation without GNSS signals, and the handling of operational exceptions via variable-latency teleoperation.

1. Mathematical and Algorithmic Foundations of Perception and Localization

The ability of a robotic agent to build a three-dimensional understanding of its environment and to position itself accurately within it is the fundamental prerequisite for any form of autonomy. The methodologies used on Earth and on other celestial bodies differ significantly due to physical, infrastructural, and computational restrictions.

1.1 Global SLAM vs. Local Visual Odometry

In terrestrial autonomous driving, the navigation architecture relies heavily on Simultaneous Localization and Mapping (SLAM). The SLAM paradigm addresses a classic circular dependency in estimation theory: building an accurate map of the environment requires knowing the vehicle’s position, but determining that position requires a map. Terrestrial vehicles use advanced implementations such as FastSLAM, which pairs a particle filter over vehicle trajectories with small extended Kalman filters that track the uncertainty of each landmark, reducing computational complexity for real-time applications.

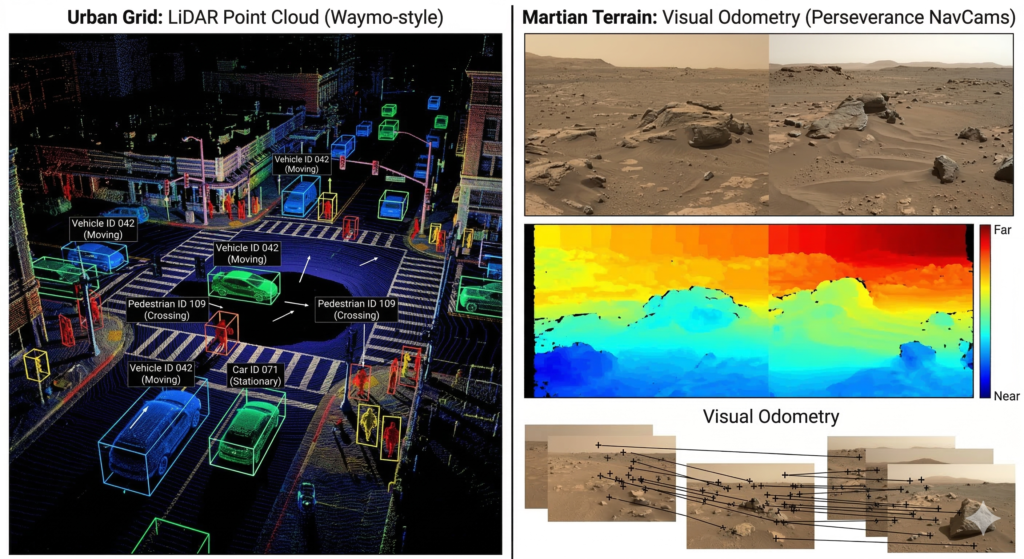

In the terrestrial context, SLAM is inherently global. The system actively seeks “loop closure” to correct cumulative drift by recognizing previously visited locations, a vital feature in urban environments or tunnels where GPS signals are degraded. Advanced setups also use feature-based approaches like Lidar Odometry and Mapping (LOAM) or Fast Global Registration, generating three-dimensional voxel grids.

Conversely, earlier Martian rovers like Spirit and Opportunity relied almost exclusively on Visual Odometry (VO). Unlike global SLAM, VO is a strictly local and incremental estimation technique. The Mars Exploration Rovers updated their 6-DOF pose estimate at 8 Hz from wheel encoders and inertial measurements, and used VO to correct that estimate by tracking salient terrain features between successive pairs of stereo images. VO provides the local consistency needed to avoid imminent collisions, but it does not maintain a global map or attempt to close topological loops. This lightweight approach was historically mandatory due to the strict limitations of the rovers’ 20 MHz rad-hard processors.
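Why purely incremental estimation drifts can be seen by chaining relative motions in the plane. The following is a minimal sketch, not flight code: the 1 m step length and the 0.1 degree per-step heading bias are invented values chosen only to make the effect visible.

```python
import math

def compose(pose, delta):
    """Apply a body-frame motion (dx, dy, dtheta) to a world-frame pose (x, y, theta)."""
    x, y, th = pose
    dx, dy, dth = delta
    return (x + dx * math.cos(th) - dy * math.sin(th),
            y + dx * math.sin(th) + dy * math.cos(th),
            th + dth)

def integrate(deltas, start=(0.0, 0.0, 0.0)):
    """Chain incremental motions; each error is carried into every later pose."""
    pose = start
    for d in deltas:
        pose = compose(pose, d)
    return pose

# Ground truth: 100 straight steps of 1 m each.
truth = integrate([(1.0, 0.0, 0.0)] * 100)
# Estimate: same steps, but each carries a tiny heading bias (assumed value).
estimate = integrate([(1.0, 0.0, math.radians(0.1))] * 100)

drift_m = math.hypot(estimate[0] - truth[0], estimate[1] - truth[1])
print(f"position drift after 100 m: {drift_m:.2f} m")
```

Because nothing in the chain refers back to an absolute reference, the error only grows with distance travelled; loop closure or an external map is what resets it.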

1.2 The Critical Evolution of Sensor Fusion

Perceptual robustness in any autonomous system stems from sensory redundancy and sensor fusion. In the automotive sector, sensor fusion combines heterogeneous data from high-resolution cameras, LiDAR, and radar. This mix is necessary because each sensor has intrinsic limits: cameras struggle in low light and glare, LiDAR is degraded by dense precipitation, and radar lacks the resolution to distinguish fine details.
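One of the simplest forms of fusion is an inverse-variance weighted average, in which each sensor contributes in proportion to how much it can be trusted. The sketch below assumes independent, zero-mean Gaussian errors; the range readings and variances are invented for illustration and do not describe any particular sensor.

```python
def fuse(measurements):
    """Fuse (value, variance) pairs by inverse-variance weighting.

    Returns (fused_value, fused_variance). Assumes independent Gaussian errors.
    """
    weights = [1.0 / variance for _, variance in measurements]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, measurements)) / total
    return value, 1.0 / total

# Hypothetical range-to-obstacle readings in metres: (value, variance).
camera = (20.6, 1.0)    # noisy depth from stereo
lidar = (20.0, 0.04)    # precise in clear conditions
radar = (20.3, 0.25)

value, variance = fuse([camera, lidar, radar])
print(f"fused range: {value:.2f} m, variance: {variance:.4f}")
```

The fused variance is lower than that of the best single sensor, and if rain inflates the LiDAR variance, the same formula automatically shifts weight toward radar.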

On the surface of Mars or the Moon, perceptual challenges are exacerbated along different lines. The main problems for visual systems are blinding sunlight, deep and sharp shadows devoid of terrestrial atmospheric diffusion, and ubiquitous abrasive dust. Extreme, unfiltered lighting drastically increases the mismatching rate of stereoscopic vision algorithms. Furthermore, the lack of texture in vast sandy regions renders traditional visual triangulation ineffective.

Strict mass, volume, and power limits have historically precluded the use of LiDAR for dynamic navigation on rovers, forcing reliance on passive stereo vision. However, recent missions are beginning to converge toward terrestrial vehicle designs. For example, Lunar Outpost’s MAPP lunar rover integrates a Time-of-Flight depth camera developed by MIT to improve depth perception and real-time localization. Methodologies currently in development for planetary exploration use factor-graph optimization to fuse multiple sensors, with the inertial measurement unit odometry node at the center of the graph.

1.3 Semantic Segmentation and Automatic Terrain Classification

Semantic segmentation is a highly mature technology in terrestrial autonomous vehicles. This maturity was achieved by training Convolutional Neural Networks (CNNs) on massive, meticulously annotated urban datasets like Cityscapes. In space, semantic segmentation plays an equally vital role in safety, used to dynamically distinguish traversable bedrock, treacherous fine sand, impassable boulders, and the sky background.
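Downstream of the network, segmentation output is typically reduced to a per-pixel class label and then to something a planner can use, such as a traversal cost. The sketch below assumes the four terrain classes named above; the score values and the cost table are hypothetical.

```python
import math

CLASSES = ("bedrock", "sand", "boulder", "sky")
# Hypothetical traversal costs: infinite means "never drive here".
TRAVERSE_COST = {"bedrock": 1.0, "sand": 5.0, "boulder": math.inf, "sky": math.inf}

def classify(scores):
    """Turn a grid of per-class scores into a grid of class names (argmax per pixel)."""
    return [[CLASSES[max(range(len(CLASSES)), key=pixel.__getitem__)] for pixel in row]
            for row in scores]

def cost_grid(labels):
    """Map each class label to its traversal cost."""
    return [[TRAVERSE_COST[name] for name in row] for row in labels]

# A 2x2 "image" of made-up network outputs, one score per class.
scores = [
    [[0.70, 0.20, 0.05, 0.05], [0.10, 0.80, 0.05, 0.05]],
    [[0.10, 0.10, 0.70, 0.10], [0.00, 0.00, 0.10, 0.90]],
]
labels = classify(scores)
print(labels)
print(cost_grid(labels))
```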

The primary limitation in directly applying these techniques in space is the lack of massive volumes of pixel-level annotated training data from target worlds. Aerospace engineers employ transfer learning and domain adaptation, initially training models on datasets from terrestrial analogue landscapes. An excellent example of overcoming this scarcity is the Jet Propulsion Laboratory’s “AI4Mars” crowdsourcing project. Thousands of volunteers labeled Martian images, creating a dataset used to train machine-learning terrain classifiers for the Curiosity and Perseverance missions.

2. The Computational Gap: Computing Architectures and Radiation Resilience

The fundamental bottleneck separating the cognitive capacities of a commercial autonomous car from those of a state-of-the-art Martian rover is hardware. The disparities in raw computing power are staggering and stem from two harsh constraints: the extreme radiation environment of space and severe thermal and energy budgets.

2.1 Terrestrial COTS Processors vs. Radiation-Hardened Devices

In terrestrial vehicles, the commercial push toward full autonomy has funded the development of specialized Systems-on-Chip (SoCs) capable of delivering hundreds to thousands of TOPS (Tera Operations Per Second). By contrast, highly successful rovers like Curiosity, as well as the main computing platform of Perseverance, are governed by the RAD750 processor. Based on the decades-old PowerPC 750 architecture, this hardware offers no native AI acceleration and draws very little power.

The use of this seemingly obsolete hardware is a matter of survival. Radiation-hardened (rad-hard) components are designed to ensure that processing is not interrupted or corrupted by high-energy cosmic radiation or solar flares. The primary risk is Single Event Effects (SEE), including Single Event Upsets (SEU), in which an energetic particle flips a memory bit. On Earth, a vehicle experiencing a glitch can safely pull over; in deep space, a system crash during landing or a critical maneuver can result in the loss of a multibillion-dollar mission.

2.2 Rad-Hard vs. Rad-Tolerant in the “New Space” Era

The need to balance extreme safety with higher performance has led to the distinction between Rad-Hard and Rad-Tolerant devices. Fully rad-hard electronics are designed to minimize both transient and destructive events. However, their performance limitations make running modern AI frameworks impractical.

Rad-tolerant technologies, widely used for Low Earth Orbit (LEO) satellite constellations, are built to survive destructive SEEs up to a lower threshold but remain susceptible to non-destructive, transient events, which are mitigated through system-level safeguards like logical redundancy and error correction codes. Since LEO missions experience significantly lower radiation exposure than deep space missions, using rad-tolerant Commercial Off-The-Shelf (COTS) components there is advantageous. For planetary exploration, however, the residual risk of destructive events remains a critical obstacle without radical architectural interventions.
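To make the error-correction safeguard concrete, here is a minimal sketch of a Hamming(7,4) code, the textbook single-error-correcting scheme. Real memory controllers typically use wider codes with double-error detection; this small version is only meant to show how a single upset bit is located and repaired.

```python
def encode(data):
    """Encode 4 data bits as a 7-bit Hamming codeword [p1, p2, d1, p4, d2, d3, d4]."""
    d1, d2, d3, d4 = data
    p1 = d1 ^ d2 ^ d4   # covers codeword positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4   # covers codeword positions 2, 3, 6, 7
    p4 = d2 ^ d3 ^ d4   # covers codeword positions 4, 5, 6, 7
    return [p1, p2, d1, p4, d2, d3, d4]

def decode(codeword):
    """Correct at most one flipped bit; return (data_bits, error_position or 0)."""
    c = list(codeword)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s4 = c[3] ^ c[4] ^ c[5] ^ c[6]
    position = s1 + 2 * s2 + 4 * s4   # syndrome = 1-based index of the bad bit
    if position:
        c[position - 1] ^= 1
    return [c[2], c[4], c[5], c[6]], position

word = [1, 0, 1, 1]
stored = encode(word)
stored[4] ^= 1                      # simulate a single event upset in memory
recovered, where = decode(stored)
print(recovered, "corrected bit at position", where)
```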

3. The Injection of COTS Computing and Hybrid Space Architectures

The inability to run modern perception algorithms on limited processors has catalyzed research into safely integrating COTS components into spacecraft. Because commercial CPUs and GPUs offer compute densities that are orders of magnitude higher, the current aerospace approach attempts to protect them through software safeguards or hybrid architectures.

3.1 Triple Modular Redundancy (TMR) and Software Protections

A long-established but continuously refined approach is Triple Modular Redundancy (TMR). In this paradigm, input data, logical calculations, and RAM storage are physically or logically triplicated and processed in parallel in isolated units. The final results undergo majority voting. If cosmic radiation causes a bit flip in one unit, the majority vote of the other two healthy units masks the error in real time.
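The voting step can be sketched as a bitwise majority over three copies of a word. This is a software illustration of the principle rather than any specific flight implementation; the 8-bit example value is arbitrary.

```python
def vote(a: int, b: int, c: int) -> int:
    """Bitwise majority: each output bit takes the value held by at least two inputs."""
    return (a & b) | (a & c) | (b & c)

def disagreeing(a: int, b: int, c: int) -> list:
    """Indices of replicas that differ from the voted result (candidates for repair)."""
    voted = vote(a, b, c)
    return [i for i, word in enumerate((a, b, c)) if word != voted]

good = 0b1011_0010
upset = good ^ (1 << 5)            # one replica suffers a bit flip

print(bin(vote(good, upset, good)))    # the flip is masked
print(disagreeing(good, upset, good))  # replica 1 is flagged for scrubbing
```

Because the vote is taken per bit, the result is still correct when two replicas are hit in different bit positions. It fails only when two replicas are corrupted in the same bit, which is why the faulty copy is usually rewritten ("scrubbed") as soon as it is detected.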

Software protection is becoming notably more sophisticated with neural-network-aware frameworks like RedNet, designed for Deep Neural Network (DNN) inference aboard satellites. By exploiting the fact that different neural network layers are not equally vulnerable to SEU errors, RedNet applies radiation tolerance selectively. This suppresses the harmful influence of radiation errors while keeping the overhead on onboard DNN inference negligible.

3.2 The ScOSA Architecture: Synergy Between Terrestrial GPUs and Rad-Hard CPUs

The most advanced implementation of the hybrid concept is the Scalable On-board Computing for Space Avionics (ScOSA) architecture. ScOSA pairs two kinds of modules: Reliable Computing Nodes (rad-hard modules for critical tasks) and High-Performance Nodes housing COTS GPUs, often drawn from the NVIDIA Jetson ecosystem found in terrestrial automation.

Within ScOSA, the Jetson GPU handles computationally intensive AI operations like environmental perception and robotic manipulation. The rad-hard co-processor acts as a supervisor, constantly monitoring the GPU’s health through heartbeat signals and watchdog timers. If the neural software is corrupted by a Single Event Functional Interrupt (SEFI), the reliable node triggers an escalating chain of responses, ranging from task restarts to re-flashing the entire GPU software layer from protected ROM.
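The supervision pattern described here, heartbeats plus a watchdog with an escalation ladder, can be sketched in a few lines. The class, the action names, and the timing values below are hypothetical and are not taken from the ScOSA software; they only illustrate the control flow.

```python
from dataclasses import dataclass, field

# Hypothetical recovery ladder, mildest action first.
ESCALATION = ("restart_task", "reboot_node", "reflash_from_rom")

@dataclass
class Supervisor:
    timeout_s: float
    last_beat: float = 0.0
    level: int = 0
    log: list = field(default_factory=list)

    def heartbeat(self, now: float) -> None:
        """Called when the monitored node reports in; resets the ladder."""
        self.last_beat = now
        self.level = 0

    def check(self, now: float):
        """Called periodically by the reliable node; returns an action or None."""
        if now - self.last_beat <= self.timeout_s:
            return None
        action = ESCALATION[min(self.level, len(ESCALATION) - 1)]
        self.level += 1
        self.last_beat = now   # give the recovery action one timeout to take effect
        self.log.append((now, action))
        return action

sup = Supervisor(timeout_s=1.0)
sup.heartbeat(0.0)
for t in (0.5, 2.0, 2.5, 4.0, 6.0):
    print(t, sup.check(t))
```

Each missed deadline moves one rung up the ladder, and a single valid heartbeat drops the supervisor back to the mildest response.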

4. Evolution of Navigation Paradigms and Autonomy Control

The operational transition from strict step-by-step command execution to fluid decision-making autonomy is where cross-pollination with terrestrial vehicles is most evident.

4.1 From “Stop-and-Go” to “Thinking-While-Driving”

The Curiosity rover used a version of AutoNav heavily constrained by hardware bottlenecks. At each decision step, Curiosity came to a complete stop to capture and analyze stereo images. This “stop-and-go” model limited its autonomous traversal speed to about 20 meters per hour.

The generational leap is embodied in ENav (Enhanced Autonomous Navigation), the system adopted by the Perseverance rover. ENav introduced a two-stage path-selection approach that searches for the best route while staying within computational limits. Coupled with an FPGA-accelerated vision coprocessor, Perseverance achieved a vital capability: “thinking while driving.” Rather than stopping at every step, the rover keeps analyzing the 3D environment while its wheels keep turning, unlocking speeds up to 120 meters per hour.
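The speed gain comes from overlapping computation with motion instead of alternating between them. A toy timing model makes this visible. All parameters below (step length, wheel speed, processing time per step) are assumptions picked so that the model lands near the figures quoted above; they are not measured rover values.

```python
def stop_and_go_m_per_h(step_m: float, wheel_speed_m_s: float, think_s: float) -> float:
    """Drive a step, halt, process imagery, repeat: the two times add up."""
    return step_m / (step_m / wheel_speed_m_s + think_s) * 3600.0

def overlapped_m_per_h(step_m: float, wheel_speed_m_s: float, think_s: float) -> float:
    """Process the next step while driving the current one: the slower stage dominates."""
    return step_m / max(step_m / wheel_speed_m_s, think_s) * 3600.0

STEP_M = 1.0            # assumed distance between planning cycles
WHEEL_SPEED = 0.04      # assumed mechanical speed, m/s

print(stop_and_go_m_per_h(STEP_M, WHEEL_SPEED, think_s=155.0))  # slow CPU, halting
print(overlapped_m_per_h(STEP_M, WHEEL_SPEED, think_s=155.0))   # overlap alone helps little
print(overlapped_m_per_h(STEP_M, WHEEL_SPEED, think_s=30.0))    # overlap plus faster vision
```

In this model, overlapping alone barely helps while processing remains the bottleneck. The large gain appears only when faster image processing is combined with continuous driving.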

4.2 Beating the Drift: Autonomous On-Board Global Localization

The central unresolved problem of pure Visual Odometry is the relentless accumulation of positional uncertainty and trajectory drift. In GNSS-denied contexts, the absolute position estimate degrades over time. Historically, JPL cartographers had to manually relocalize the rover against orbital maps at the end of each drive to reset this uncertainty.

To overcome this, Perseverance engineering teams implemented “On-Board Global Localization,” a technology inherited from terrestrial autonomous vehicles and warehouse fleets. The system uses the “Censible” algorithm to formulate rover localization as a top-down image registration problem. It compares binary descriptors derived from the rover’s own surface imagery against pre-loaded orbital maps. This process achieves sub-meter convergence without large error spikes, stabilizing the rover’s position within a 0.5-meter margin.
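Registration against an orbital map can be illustrated with a census transform, a standard descriptor that encodes each pixel as a bit string of brightness comparisons with its neighbors, followed by a Hamming-distance search. This is a toy sketch of the general idea on a synthetic grid; whether it matches the flight algorithm in detail is an assumption, and all function names are illustrative.

```python
import random

def census(img):
    """3x3 census transform: 8-bit code per interior pixel (bit = neighbor < center)."""
    h, w = len(img), len(img[0])
    out = [[0] * (w - 2) for _ in range(h - 2)]
    for r in range(1, h - 1):
        for c in range(1, w - 1):
            code = 0
            for dr in (-1, 0, 1):
                for dc in (-1, 0, 1):
                    if dr or dc:
                        code = (code << 1) | int(img[r + dr][c + dc] < img[r][c])
            out[r - 1][c - 1] = code
    return out

def hamming_cost(patch, ref, r0, c0):
    """Total number of differing descriptor bits with the patch placed at (r0, c0)."""
    return sum(bin(patch[r][c] ^ ref[r0 + r][c0 + c]).count("1")
               for r in range(len(patch)) for c in range(len(patch[0])))

def localize(local_img, orbital_img):
    """Return the (row, col) offset in the orbital map that best matches the local view."""
    patch, ref = census(local_img), census(orbital_img)
    candidates = [(hamming_cost(patch, ref, r, c), (r, c))
                  for r in range(len(ref) - len(patch) + 1)
                  for c in range(len(ref[0]) - len(patch[0]) + 1)]
    return min(candidates)[1]

# Synthetic "orbital map" and a local view cut from it under different lighting.
rng = random.Random(42)
orbital = [[rng.randrange(256) for _ in range(12)] for _ in range(12)]
local = [[2 * v + 10 for v in row[6:11]] for row in orbital[4:9]]
print(localize(local, orbital))
```

Because the descriptor depends only on brightness ordering, the match survives the gain and offset change applied to the local view, which is the property that makes such descriptors attractive when ground and orbital images are taken under different illumination.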

4.3 Deep Reinforcement Learning (DRL) and Holistic End-to-End Control

In terrestrial automotive research, the leading trend is to abandon compartmentalized pipelines in favor of “End-to-End” driving powered by Deep Reinforcement Learning (DRL). Rather than splitting tasks into identification, tracking, and steering nodes, an End-to-End model maps raw visual input directly to control outputs via deep neural networks.

The results of this paradigm are reshaping autonomous exploration and motion planning in aerospace. Recent development projects focus on policies learned by neural networks that map depth camera images and LiDAR scans to a kinematic plan. Furthermore, DRL training allows lunar rovers to manage catastrophic scenarios. If a rover suffers an irreversible motor failure, the reinforcement learning system can adapt, altering its policy in fractions of a second to compensate for the resulting torque asymmetry and maintain mobility.

5. On-Board Intelligence and MAARS Analytics: Scientific Triage

The concept of Edge Computing, where heavy decision-making analysis occurs at the vehicle’s sensory level rather than relying on a delayed response from a central remote server, manifests in space rovers through initiatives like MAARS (Machine learning-based Analytics for Autonomous Rover Systems).

The immense flow of data produced by onboard multispectral instruments far exceeds the available interplanetary bandwidth. To solve this, JPL introduced the “Drive-By Science” concept, implementing visual analysis algorithms for scientific triage. It combines deep learning techniques, pairing CNNs with Long Short-Term Memory (LSTM) networks. By assessing the scientific value of rock formations in real time, the rover prioritizes its telemetry, sending only the most valuable sequences back to Earth.
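Once each observation has a value score, triage reduces to choosing what fits in the downlink budget. The sketch below uses a simple greedy rule, ranking by score per megabyte. The product names, sizes, scores, and the greedy strategy itself are illustrative assumptions, not a description of the MAARS software.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Product:
    name: str
    size_mb: float
    score: float   # hypothetical science-value score from an onboard classifier

def select_for_downlink(products, budget_mb):
    """Greedy triage: take the best score-per-megabyte items that still fit."""
    chosen, used = [], 0.0
    for p in sorted(products, key=lambda p: p.score / p.size_mb, reverse=True):
        if used + p.size_mb <= budget_mb:
            chosen.append(p)
            used += p.size_mb
    return chosen

queue = [
    Product("layered_outcrop", 40.0, 0.90),
    Product("sand_ripples", 30.0, 0.20),
    Product("mineral_vein", 10.0, 0.80),
    Product("empty_sky", 20.0, 0.05),
]
for p in select_for_downlink(queue, budget_mb=60.0):
    print(p.name, p.size_mb)
```

A greedy ranking is not guaranteed to be optimal for every budget, but it is cheap enough to run on constrained hardware, which is the point of doing triage on board.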

6. Industrial Synergies: Automotive Meets the Planetary Frontier

The practical overlap between these industries is cemented by operational synergies and strategic agreements between automotive manufacturers and aerospace organizations.

6.1 Toyota Lunar Cruiser

The clearest emblem of terrestrial influence on lunar exploration programs is the “Lunar Cruiser,” a joint research project between JAXA and Toyota Motor Corporation. Designed to protect future crews on long-range missions, this pressurized vehicle integrates hydrogen Regenerative Fuel Cell technology derived from Toyota’s commercial fuel cell vehicles.

Even more innovative is the inclusion of autonomous driving expertise. Building on the independent wheel control that characterizes modern multi-motor EV systems, Toyota provides technologies for unpredictable terrain. Algorithms like active four-wheel torque vectoring counteract the elevated rollover risk in reduced-gravity environments.

6.2 Lockheed Martin and General Motors: Lunar Mobility Vehicle

Lockheed Martin has partnered with General Motors, a pioneer in terrestrial electrification, to develop the Lunar Mobility Vehicle (LMV) for NASA’s Artemis program. GM brings heritage from its work on the Apollo Lunar Roving Vehicle.

The vehicle’s mobility system will draw heavily on automotive autonomy logic. The LMV will use autonomous driving to reposition itself on the lunar plains without a crew, moving from dark zones to pre-established rendezvous points long before the astronauts arrive. When no crew is aboard, the rover will serve as a remotely operated multi-use robot for geological sampling.

6.3 Lunar Outpost and GNSS-Denied Systems

Commercial “New Space” companies are aggressively pushing autonomous systems. Lunar Outpost will deploy the Mobile Autonomous Prospecting Platform (MAPP), using off-road architectures described as hybrids of dune buggies and high-capacity industrial trucks.

In a GNSS-denied environment, these missions will test optical perception tools like Ranger, originally applied to terrestrial self-driving fleets. Ranger performs robust relative positioning within a planar grid on the lunar surface using industrial cameras and low-cost hardware, representing a bottom-up approach to space navigation.

6.4 Reverse Transfer (Space to Terrestrial Metropolis)

The transfer of know-how is reciprocal. The chronic limitation of terrestrial SAE Level 4/5 autonomous driving lies in edge cases: unpredictable urban situations like unmapped construction sites or complex human gestures. When a neural network encounters a scene it cannot interpret, the vehicle stalls.

Nissan North America, in partnership with NASA Ames Research Center, conceived the SAM (Seamless Autonomous Mobility) architecture. The system draws on software developed for planetary rovers that must cope with communication delays. Integrated into terrestrial electric vehicles, SAM allows remote human operators to plot alternative routes when robotaxis stall and to send those routes back to the vehicle so it can smoothly bypass the obstacle.

7. The Imperative Role of Photorealistic Computational Simulation

Training and rigorously testing complex autonomous vehicles poses monumental challenges in data generation and collection. Exposing large fleets of prototype cars to real-world driving risks catastrophic accidents and enormous costs. In space development, there is almost no opportunity to correct mistakes once a vehicle has left Earth.

Consequently, both automotive corporations and space agencies depend heavily on high-fidelity virtual simulation. Automotive companies rely on platforms like NVIDIA DRIVE Sim and CARLA, which generate large volumes of synthetic sensor data and edge cases, in some cases using real-time ray tracing, to feed industrial data flywheels.

Aerospace engineers use similar software within the Robot Operating System (ROS) ecosystem. Tools like Gazebo and NVIDIA Isaac Sim replicate not only visual appearance but also the kinematic effects of reduced lunar gravity and soft regolith. Thanks to this realism, perception engineers can train and validate robotic AI in virtual landscapes before a single wheel track is left on the surface of another world.
