Abstract: The Resurrection of VIPER and the Extreme Lunar South Pole

Autonomous space robotics is undergoing a fundamental shift, and the AI navigation architecture of the VIPER rover marks a milestone in it. As documented by NASA Ames Research Center, the Volatiles Investigating Polar Exploration Rover (VIPER) was designed to operate where no solar-powered vehicle has gone before: the Permanently Shadowed Regions (PSRs) of the lunar South Pole.

NASA announced the mission’s cancellation in mid-2024, citing cost growth and schedule delays, but the program was later revived. Following sustained advocacy from the aerospace community, NASA selected Blue Origin in late 2025 to deliver the rover. VIPER is now targeted to reach the surface aboard the Blue Moon Mark 1 (MK1) lander in 2027, turning a canceled flight rover into a critical pathfinder for the Artemis era.

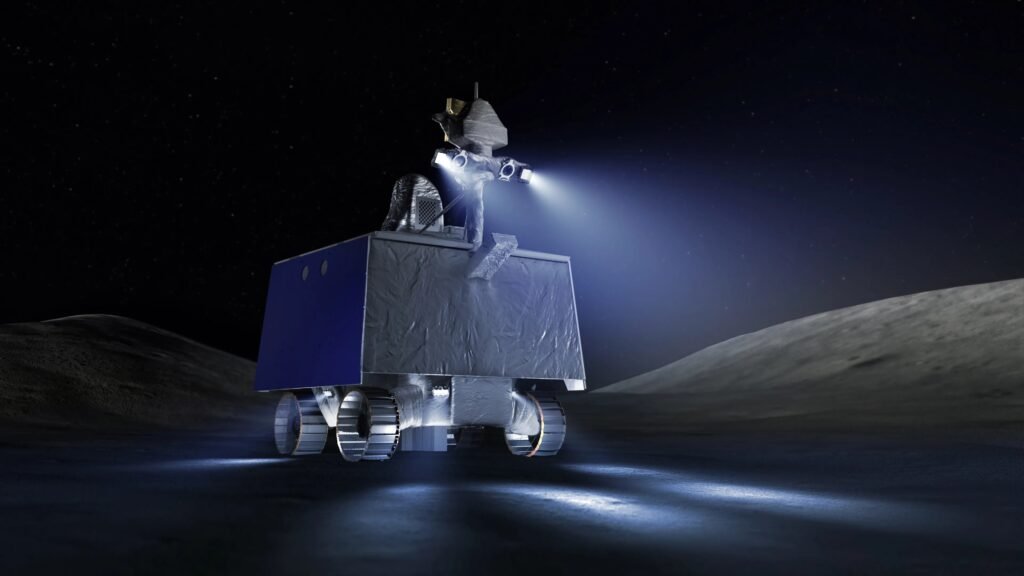

Operating inside PSRs means confronting an incredibly hostile operational envelope. Temperatures plummet below 40 Kelvin (-233°C), creating severe thermal management bottlenecks. More critically, the absolute absence of solar illumination nullifies traditional passive optical navigation. The rover cannot rely on the sun to illuminate the terrain or to cast static shadows for depth perception.

Consequently, the vehicle’s autonomy stack must dynamically generate its own situational awareness. The engineering challenge shifts from simple locomotion to complex active perception and real-time probabilistic pathfinding, setting a new baseline for extraterrestrial surface operations.

Active Perception: VIPER’s Hardware for Total Darkness

To safely traverse the lunar terminator and deep craters, VIPER’s sensor suite cannot follow the approach used by Mars rovers such as Perseverance, which rely heavily on passive stereo vision under ambient sunlight. VIPER must bring its own light.

Beyond Traditional Stereo Vision (and the Limits of LIDAR)

A common question among robotics engineers is why VIPER does not rely primarily on LIDAR (Light Detection and Ranging), a staple in terrestrial autonomous vehicles. The answer lies in the intersection of payload mass, power budgets, and radiation hardening.

- Rad-Hard LIDAR Constraints: Commercial Off-The-Shelf (COTS) solid-state LIDARs are highly susceptible to single-event upsets (SEUs) from cosmic radiation. Developing a fully radiation-hardened 3D LIDAR requires significant lead time and adds prohibitive mass and power draw.

- The LED-Camera Solution: Instead, the VIPER team engineered a mast-mounted active illumination system. This consists of a synchronized array of high-power LED luminaires flanking the primary NavCams (Navigation Cameras).

- Beam Shaping: The LEDs are specifically designed with tailored beam angles to throw light far enough to detect hazards at a safe stopping distance, while minimizing backscatter from lunar dust suspended by electrostatic charge.
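The "safe stopping distance" requirement in the list above can be made concrete with basic kinematics. The following is a minimal sketch, not VIPER flight logic: the struct, the function names, and every numeric value a caller would pass in are hypothetical and chosen only for illustration.

```cpp
#include <cassert>

// Hypothetical parameters; none of these names or values come from VIPER documentation.
struct StoppingParams {
    double speed_mps;        // rover ground speed
    double reaction_time_s;  // delay from image capture to brake command
    double decel_mps2;       // achievable deceleration on regolith
    double safety_margin_m;  // extra buffer kept in front of the hazard
};

// Minimum range at which a hazard must be visible for the rover to stop in time:
// distance travelled while reacting + braking distance + safety margin.
double requiredDetectionRange(const StoppingParams& p) {
    assert(p.decel_mps2 > 0.0);
    const double reaction_dist = p.speed_mps * p.reaction_time_s;
    const double braking_dist = (p.speed_mps * p.speed_mps) / (2.0 * p.decel_mps2);
    return reaction_dist + braking_dist + p.safety_margin_m;
}

// True if the range lit by the LEDs covers the required detection range.
bool illuminationIsSufficient(double lit_range_m, const StoppingParams& p) {
    return lit_range_m >= requiredDetectionRange(p);
}
```

With an assumed speed of 0.2 m/s, a 2 s reaction time, 0.1 m/s² of deceleration, and a 1 m margin, the required range works out to 0.4 + 0.2 + 1.0 = 1.6 m. At rover speeds, the fixed margin and the reaction delay dominate the braking term.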

Compute Platform: Space Computation vs. Thermal Survival

Space computation is a zero-sum game involving power, thermal output, and processing capability. VIPER operates on a strict power budget provided by its solar arrays and batteries, which must be carefully husbanded during 96-hour excursions into total darkness.

The primary flight computer is based on the robust, but computationally limited, RAD750 processor. However, processing complex Computer Vision (CV) and AI pathfinding algorithms requires dedicated hardware acceleration.

To achieve this, the architecture offloads visual odometry and hazard detection to a secondary, high-performance compute element (such elements are often built around specialized FPGAs). In this dual-node design, a radiation-induced fault in the vision processor does not take down the core vehicle management system. The crucial engineering trade-off is throttling AI compute cycles so that battery power is preserved for the vehicle’s survival heaters in the cryogenic cold.
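The throttling trade-off can be pictured as an energy budget: heaters and fixed loads are served first, and vision compute gets whatever is left. The sketch below is an assumption-laden illustration, not the mission's power manager; `PowerState`, `visionDutyCycle`, and all parameters are hypothetical names introduced here.

```cpp
#include <algorithm>
#include <cassert>

// Hypothetical power model; all fields and units are illustrative assumptions.
struct PowerState {
    double battery_wh;         // energy remaining in the battery
    double heater_w;           // survival heater draw, always served first
    double base_w;             // avionics, comms, and other fixed loads
    double hours_to_sunlight;  // expected time until the solar arrays are lit again
};

// Fraction (0..1) of the full vision-compute duty cycle that can be afforded
// while still reserving energy for heaters, base loads, and a fixed reserve.
double visionDutyCycle(const PowerState& s, double vision_full_w, double reserve_wh) {
    assert(vision_full_w > 0.0 && s.hours_to_sunlight > 0.0);
    const double committed_wh = (s.heater_w + s.base_w) * s.hours_to_sunlight + reserve_wh;
    const double spare_wh = s.battery_wh - committed_wh;
    const double full_cost_wh = vision_full_w * s.hours_to_sunlight;
    // Clamp to [0, 1]: zero when the budget is already overdrawn, one when compute is unconstrained.
    return std::max(0.0, std::min(1.0, spare_wh / full_cost_wh));
}
```

A duty cycle of 0.5 would mean running the vision pipeline on every other planning cycle, which in turn forces a lower driving speed so that the rover never outruns its hazard map.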

Methodology: AI Algorithms and Pathfinding in PSRs

The core of VIPER’s AI navigation lies in its software stack. The algorithms must interpret highly degraded visual data and make deterministic safety decisions within fractions of a second.

Visual Odometry and Dynamic Shadow Management

Standard Visual Odometry (VO) works by tracking static features (like rocks or crater rims) across consecutive camera frames to calculate the rover’s translation and rotation. However, VIPER introduces a severe algorithmic complication: dynamic shadowing.

Because the light source (the rover’s LEDs) moves with the camera, the shadows cast by lunar rocks change shape and direction in every single frame. Traditional VO algorithms often misinterpret these moving shadows as moving physical objects or false terrain features, leading to catastrophic localization drift.

To mitigate this, the software uses feature descriptors tuned to reject high-contrast shadow edges. By weighting the tracking toward illuminated topographic features rather than the shadows, it maintains an accurate state estimate even under artificial lighting.
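One simple way to picture this weighting is to score each candidate feature by how well lit its surrounding patch is and to reject patches that touch a shadow. The sketch below is a deliberately simplified stand-in for a real shadow-aware descriptor, not the mission's algorithm; the `Feature` fields, the thresholds, and both function names are hypothetical.

```cpp
#include <cassert>
#include <vector>

// Hypothetical feature record; a real VO front end would also carry a descriptor.
struct Feature {
    double x, y;        // pixel coordinates
    double patch_mean;  // mean intensity of the surrounding patch, 0..255
    double patch_min;   // darkest pixel in the patch, 0..255
};

// Tracking weight in [0, 1]. A patch containing shadow-level pixels is assumed
// to straddle a shadow edge and gets zero weight; otherwise the weight rises
// linearly with patch brightness until the patch counts as fully lit.
double shadowAwareWeight(const Feature& f, double shadow_level, double lit_level) {
    assert(lit_level > shadow_level);
    if (f.patch_min <= shadow_level) return 0.0;
    if (f.patch_mean >= lit_level) return 1.0;
    return (f.patch_mean - shadow_level) / (lit_level - shadow_level);
}

// Keep only the features whose weight reaches min_weight.
std::vector<Feature> keepTrackable(const std::vector<Feature>& in, double shadow_level,
                                   double lit_level, double min_weight) {
    std::vector<Feature> out;
    for (const Feature& f : in) {
        if (shadowAwareWeight(f, shadow_level, lit_level) >= min_weight) out.push_back(f);
    }
    return out;
}
```

The design choice here is to be conservative: discarding a valid feature costs a little accuracy, while tracking a shadow edge that moves with the rover injects a systematic error into the pose estimate.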

Grid-Based Algorithms Under Uncertainty (D* Lite)

Before the mission, orbital data from the Lunar Reconnaissance Orbiter (LRO) provides a macro-map of the lunar surface. However, this data has a resolution of roughly 1 meter per pixel, which is insufficient to spot a 30-centimeter rock that could high-center the rover.

Therefore, VIPER utilizes grid-based pathfinding algorithms that can re-evaluate routes in real time as new, local data is acquired. While A* is common for static maps, the D* Lite algorithm (or a customized variant of it) is a widely used choice for dynamic, partially known environments. As the rover’s sensors discover unmapped obstacles, D* Lite repairs the existing shortest path by reusing earlier search results instead of recalculating the entire route from scratch.

Here is a simplified conceptual C++ snippet demonstrating how a rover’s navigation stack might update the traversal cost of a map node upon detecting an unmapped hazard in the dark:

    // Simplified conceptual logic for dynamic hazard updating in a D* Lite-based grid.
    // GridMap, Point, calculateDistance, flagNodeForUpdate, triggerPathReplanning, and
    // MAX_SENSOR_RANGE are assumed to be declared elsewhere in the navigation stack.
    #include <limits>
    #include <ros/console.h>  // ROS_INFO

    void updateNodeCost(GridMap& map, const Point& current_pose, const Point& detected_hazard) {
        // 1. Calculate distance from rover to hazard
        const double distance = calculateDistance(current_pose, detected_hazard);

        // 2. Define the hazard radius based on rover footprint and safety margin
        constexpr double HAZARD_RADIUS_M = 0.5;

        // 3. Ignore detections beyond the sensor confidence range
        if (distance >= MAX_SENSOR_RANGE) {
            return;
        }

        // Mark every node inside the hazard radius as impassable.
        // getNodesInRadius is assumed to return references into the map, not copies.
        for (auto& node : map.getNodesInRadius(detected_hazard, HAZARD_RADIUS_M)) {
            node.traversal_cost = std::numeric_limits<double>::infinity();
            // Flag node for D* Lite priority queue recalculation
            flagNodeForUpdate(node);
        }

        // 4. Trigger partial path re-planning
        triggerPathReplanning(current_pose, map.goal_pose);
        ROS_INFO("Hazard mapped at X: %f, Y: %f. Re-planning trajectory.",
                 detected_hazard.x, detected_hazard.y);
    }

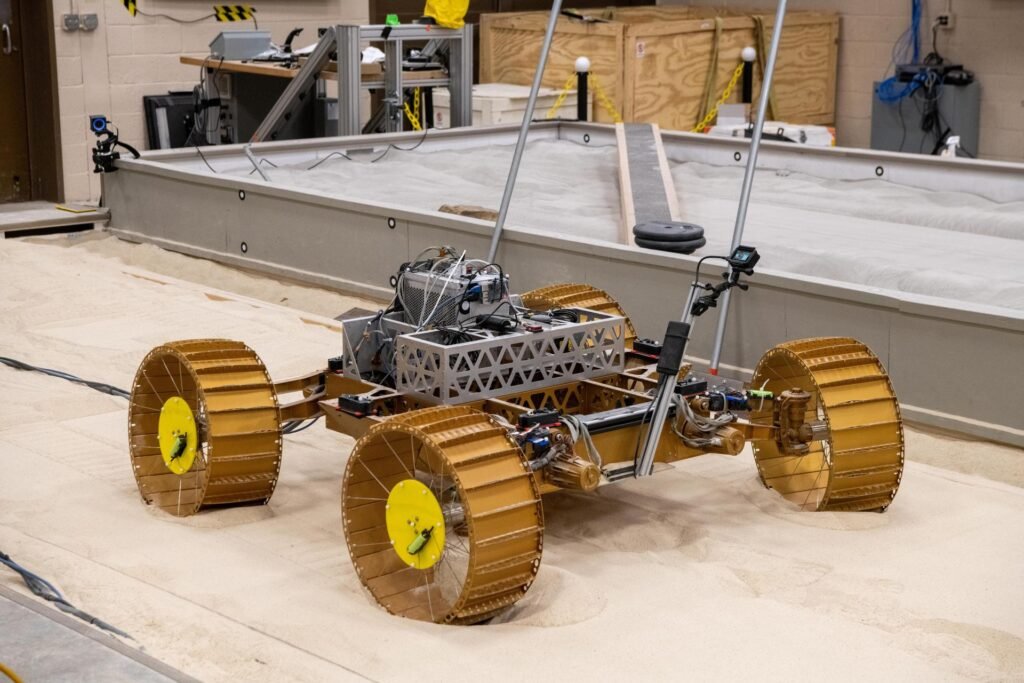
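For readers who want to see what "flagging a node for recalculation" involves inside D* Lite, the sketch below shows the two per-cell values the algorithm maintains and the priority key defined in Koenig and Likhachev's formulation. The type and function names are illustrative choices made here, and the surrounding queue and replanning loop are omitted.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <limits>
#include <utility>

struct Cell { int x; int y; };

// Per-cell values stored by D* Lite.
struct CellState {
    double g   = std::numeric_limits<double>::infinity();  // current cost-to-goal estimate
    double rhs = std::numeric_limits<double>::infinity();  // one-step lookahead cost-to-goal
};

// std::pair compares lexicographically, which matches D* Lite's key ordering.
using Key = std::pair<double, double>;

// Heuristic: straight-line distance between two cells, in cell units.
double heuristic(Cell a, Cell b) { return std::hypot(a.x - b.x, a.y - b.y); }

// Priority key: k1 = min(g, rhs) + h(start, s) + k_m, k2 = min(g, rhs).
// k_m accumulates the heuristic drift as the rover (the search start) moves.
Key calculateKey(const CellState& s, Cell cell, Cell start, double k_m) {
    assert(k_m >= 0.0);
    const double m = std::min(s.g, s.rhs);
    return {m + heuristic(start, cell) + k_m, m};
}

// A cell is locally consistent when g == rhs. A hazard update changes rhs for
// nearby cells, making them inconsistent and putting them back on the queue.
bool isConsistent(const CellState& s) { return s.g == s.rhs; }
```

Only inconsistent cells are re-expanded, which is why a single newly detected rock typically touches a small neighborhood of the grid rather than the whole map.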

Slip Estimation: The Nightmare of Lunar Regolith

Beyond obstacles, the physical medium of the Moon poses a severe threat. Lunar regolith at the South Pole is expected to be highly porous and compressible. When traversing slopes of up to 15 degrees, the rover faces a high probability of wheel slip, where the wheels turn but the vehicle does not advance proportionally.

If the navigation AI is unaware of the slip, it will believe the rover has safely crossed a crater when, in reality, it is sliding into it.

To solve this, the navigation architecture employs an Extended Kalman Filter (EKF). The EKF continuously fuses data from the wheel encoders (expected movement), the Inertial Measurement Unit (IMU) accelerometers, and the visual odometry. The state update equation is formulated as:

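$$
x_{k|k} = x_{k|k-1} + K_k \left( z_k - H_k x_{k|k-1} \right)
$$

Here $x_{k|k-1}$ is the predicted state, $z_k$ is the measurement, $H_k$ is the (linearized) measurement matrix, and $K_k$ is the Kalman gain.

If the visual odometry indicates zero forward translation while the wheel encoders register high rotation, the navigation software immediately computes a high “slip ratio.” If this ratio exceeds a hard-coded safety threshold, the autonomous system halts the rover, preventing it from digging itself into a regolith trap.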
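A scalar version of the update and the slip check can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the flight filter: the state is reduced to a single value with a variance, and `Estimate`, `kalmanUpdate`, `slipRatio`, and `shouldHalt` are hypothetical names.

```cpp
#include <cassert>
#include <cmath>

// One-dimensional estimate: state value and its variance.
struct Estimate { double x; double p; };

// Scalar form of x_{k|k} = x_{k|k-1} + K_k (z_k - H_k x_{k|k-1}).
// z is the measurement, h the measurement coefficient, r the measurement noise variance.
Estimate kalmanUpdate(Estimate prior, double z, double h, double r) {
    assert(r > 0.0);
    const double innovation = z - h * prior.x;
    const double s = h * prior.p * h + r;  // innovation variance
    const double k = prior.p * h / s;      // Kalman gain
    return {prior.x + k * innovation, (1.0 - k * h) * prior.p};
}

// Slip ratio for a driven wheel: 0 means no slip, 1 means spinning in place.
// body_speed_mps would come from the fused estimate (for example, visual odometry).
double slipRatio(double wheel_speed_radps, double wheel_radius_m, double body_speed_mps) {
    const double commanded = wheel_speed_radps * wheel_radius_m;
    if (std::fabs(commanded) < 1e-6) return 0.0;  // wheels stopped: treat as no slip
    return (commanded - body_speed_mps) / commanded;
}

// Halt when slip exceeds the configured safety threshold.
bool shouldHalt(double slip, double threshold) { return slip > threshold; }
```

For example, wheels commanded at 0.5 m/s over ground that visual odometry reports as stationary yield a slip ratio of 1.0, which any reasonable threshold would treat as a halt condition.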

Case Study: “Earth-in-the-Loop” vs. On-Board Autonomy

A defining characteristic of the VIPER mission architecture is how it balances autonomy with human oversight. Because the Moon is far closer to Earth than Mars is, communication latency is much lower: approximately 2.5 to 3 seconds round-trip.
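The round-trip figure follows directly from the Earth-Moon distance and the speed of light. Using the mean distance of about 384,400 km:

$$
t_{\mathrm{rt}} = \frac{2d}{c} = \frac{2 \times 3.844 \times 10^{8}\ \mathrm{m}}{2.998 \times 10^{8}\ \mathrm{m/s}} \approx 2.56\ \mathrm{s}
$$

The value ranges from roughly 2.4 s near perigee to 2.7 s near apogee. This is light-travel time only; ground-station relay and processing add further delay on top of it.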

This allows for an “Earth-in-the-Loop” driving paradigm. Instead of the “blind” daily command sequences sent to Mars rovers, VIPER operators can utilize highly interactive waypoint navigation.

- Supervised Driving: Human operators on Earth analyze downlink stereo images and designate a safe waypoint a few meters ahead.

- Local Autonomy: Once the command is sent, VIPER’s onboard navigation software takes over. It executes the micro-pathfinding, steering, and slip-monitoring required to reach that specific waypoint safely.

If the rover detects a sudden hazard (e.g., a rock hidden in a shadow) that the human operators missed, the local hazard avoidance system overrides the waypoint command, halting the vehicle and requesting a new human assessment. This hybrid approach maximizes scientific throughput while ensuring vehicle safety.
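The hand-off between operators and onboard autonomy can be sketched as a small state machine. The states, the `Observations` fields, and the transition rules below are assumptions made for illustration and are not taken from VIPER's flight software.

```cpp
#include <cassert>

// Hypothetical supervisory states for waypoint driving.
enum class DriveState { AwaitingWaypoint, Driving, HaltedForReview };

// Hypothetical inputs sampled once per control cycle.
struct Observations {
    bool waypoint_received;  // operators uplinked a new waypoint
    bool hazard_detected;    // local hazard detection fired
    bool slip_exceeded;      // slip ratio above the safety threshold
    bool waypoint_reached;   // rover arrived at the commanded waypoint
};

// One transition of the supervisor. Safety conditions are checked before
// progress conditions, so a hazard always overrides the waypoint command.
DriveState step(DriveState s, const Observations& o) {
    switch (s) {
        case DriveState::AwaitingWaypoint:
            return o.waypoint_received ? DriveState::Driving : s;
        case DriveState::Driving:
            if (o.hazard_detected || o.slip_exceeded) return DriveState::HaltedForReview;
            if (o.waypoint_reached) return DriveState::AwaitingWaypoint;
            return s;
        case DriveState::HaltedForReview:
            // Only a fresh operator decision releases the halt.
            return o.waypoint_received ? DriveState::Driving : s;
    }
    assert(false && "unhandled DriveState");
    return s;
}
```

Keeping the halt state sticky until operators respond reflects the division of labor described above: the rover decides when to stop, and humans decide where to go next.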

Conclusion: The Legacy for Blue Origin and the Artemis Program

The resurrection of VIPER and its upcoming flight aboard Blue Origin’s lander make the mission a crucial technological proving ground as well as a scientific endeavor. VIPER’s AI navigation stack is not just a bespoke solution for one vehicle; it is a foundational blueprint for the next decade of lunar operations.

By validating active illumination VO, dynamic D* Lite pathfinding in total darkness, and precise slip estimation on polar regolith, VIPER is de-risking the technologies required for the Lunar Terrain Vehicle (LTV) and future Commercial Lunar Payload Services (CLPS) missions. For robotics engineers and aerospace scientists, the data returned from this 2027 mission will finally bridge the gap between theoretical AI autonomy and the harsh reality of the lunar shadows.
